From Language Models to Reasoning Machines

Dennj Osele

Building the mathematics of intelligence and artificial subjectivity.

Moving AI from black-box statistics to transparent, symbolic understanding to create a math superintelligence.

About Me

I am passionate about intelligence and subjectivity! Ever since I was a kid, I have been fascinated by how the brain works and how a machine could create subjective experiences such as feelings and sensations.

Computer games like chess, which showed signs of intelligence, drew me to computers. When I was 14 years old, I got my first computer, a second-hand MS-DOS machine with Visual Basic installed. I taught myself to code from the preinstalled documentation and built some video games, such as Snake.

Years later, I earned a degree in Information Engineering, which allowed me to deepen my studies in mathematics, programming, control theory, and machine learning. I began my career at a robotics startup (Oxford Technologies) and later moved to Amazon AWS, where I trained LLMs for Alexa.

I am now working on teaching AI (LLMs and Math World Models) to do math by translating existing math papers into code in a formal language, which can then be used as a dataset for training models.
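As a minimal illustration of what one such translation could look like, here is a sketch of the informal sentence "addition of natural numbers is commutative" written as a formal statement. The choice of Lean 4 and the theorem name `add_comm_sketch` are assumptions made for this example, not a description of the actual pipeline.

```lean
-- Illustrative sketch only: Lean 4 is assumed here as the formal language.
-- Informal statement: for all natural numbers a and b, a + b = b + a.
theorem add_comm_sketch (a b : Nat) : a + b = b + a :=
  Nat.add_comm a b  -- proof by the core library lemma Nat.add_comm
```

Because the proof checker accepts or rejects each statement mechanically, pairs of informal text and formal code like this one can be verified before they are added to a training set.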

Current Work

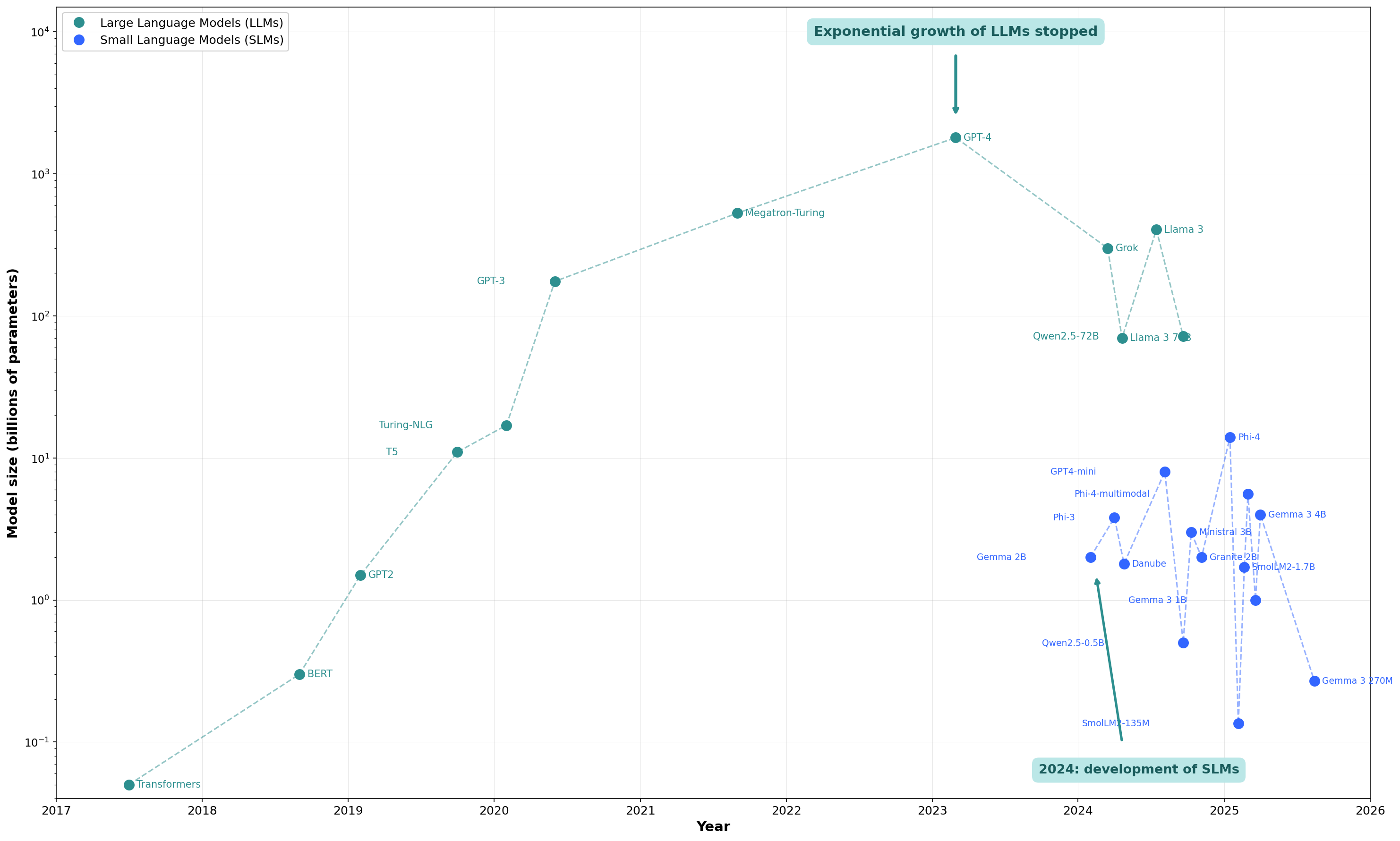

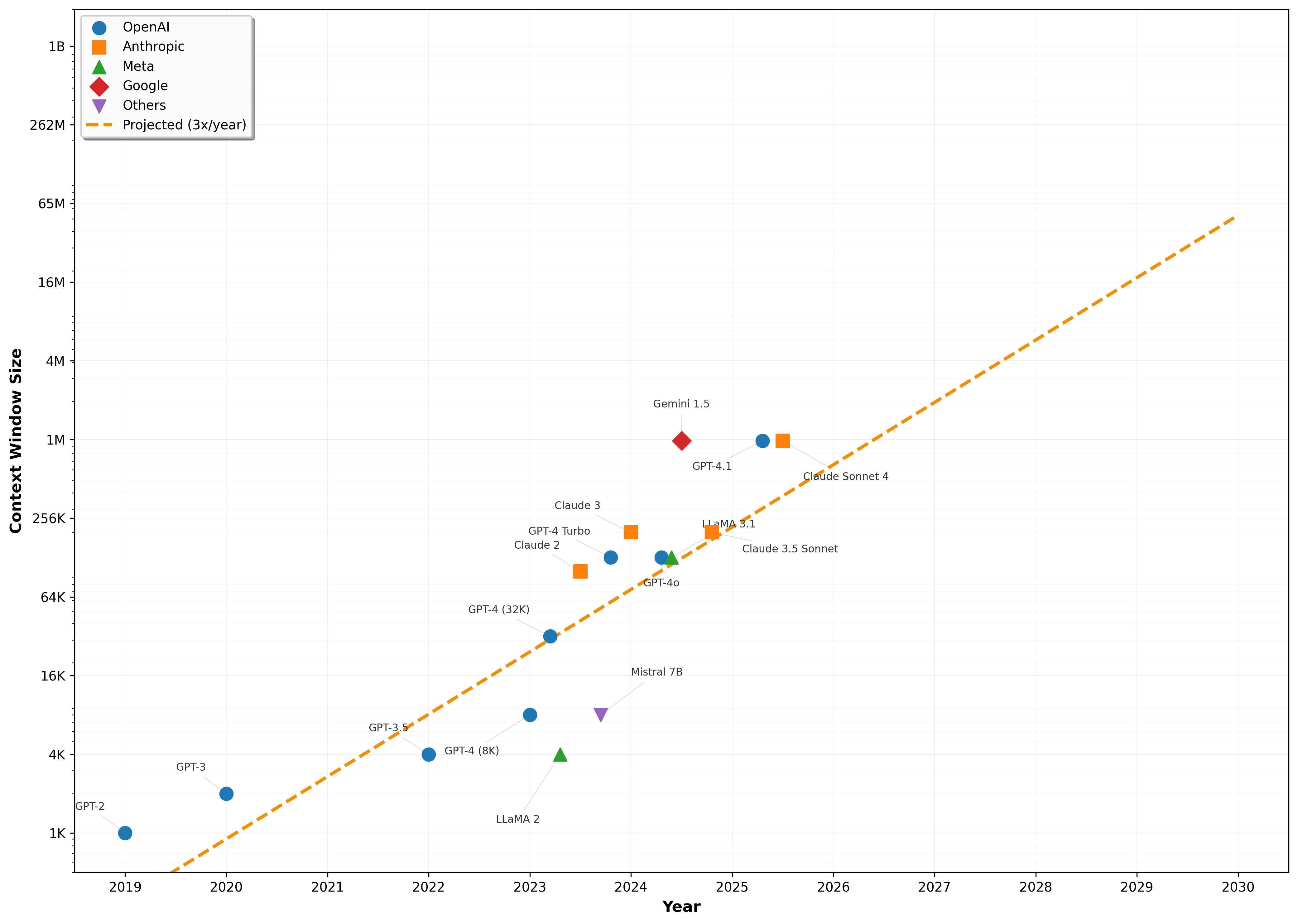

I disagree with the general view that larger LLMs are always better. Larger LLMs mainly store more knowledge, but with larger context windows a model can load external knowledge on demand, leaving the AI to focus only on reasoning. This reduces hallucination and the need for frequent retraining, allows the model to run locally on inexpensive hardware, and leaves more capacity for reasoning over the data.

While frontier models could reach AGI by building ever-larger black boxes, I believe we can instead get there by understanding the math of AI through small, pure reasoning models. Frontier models demand massive data centers, fiber optics, superconductors, and even nuclear-scale power, while the human brain achieves superior reasoning on roughly 20 watts, in a device made of water and fat. The problem is clearly not hardware. Biology has discovered a mathematical formula for intelligence that we have not. By training smaller models, we move closer to uncovering that formula and to building pure reasoning systems.

Fundamentally, I believe mathematics is the key to teaching small models to think and to rewriting intelligence, moving from today's neural-network black boxes to a symbolic intelligence that can be explained and understood.

Vision

I. The Language of Reality

Mathematics is the language of the universe: the substrate on which physics, chemistry, the human mind, and everything else is built. Doing math means constructing a symbolic representation of a logic that exists independently of us. We discover the fabric of the universe by building symbols.

An alien civilization from another star would write its math in different symbols. But under the surface of those symbols, it would be the same math: a universal language of reality itself that divides what is possible from what is not.

II. The Biological Constraint

Yet millions of years of evolving to survive in the forests did not shape us to do mathematics, and it is unclear why the human brain understands math at all. Rather than being a specialized adaptation, mathematics seems to emerge from the same abstract structures that make intelligence possible.

However, we are far from perfect math processors. We cannot visualize a hypercube or intuitively grasp quantum physics. Still, we are able to write logical constructs in symbols and run them on our brain circuits, putting math and physics to work for our purposes.

Our brain is a poor device for doing math and understanding the universe.

III. The Path to Superintelligence

But recent progress shows that AI already performs as well as the best human competitors at math olympiads. And teaching math to AI forms neural circuits that strengthen the model's general reasoning skills.

Training AI to do math is the best way forward to create a device with superhuman intelligence, one able to solve open math problems, understand the universe, and advance scientific discovery.

A Math AI is also the best way to create a model made of pure intelligence, without the contamination of knowledge that characterizes LLMs. A purely mathematical model encodes the mathematical structure of intelligence and will bring us closer to discovering the mathematics of intelligence itself.

Blog

Why do we need Small Language Models?

Efficiency, privacy, and the path to edge intelligence. Why bigger isn't always better.

Why GPT Context Windows Need a File System

Managing state and memory in the era of infinite context. The architecture of future AI OS.

Web 4.0: The AI Web

How autonomous agents will reshape the internet and how we consume information.

The First Agentic Commerce Experiment

What we learned from giving an AI money and a supermarket API to spend it.

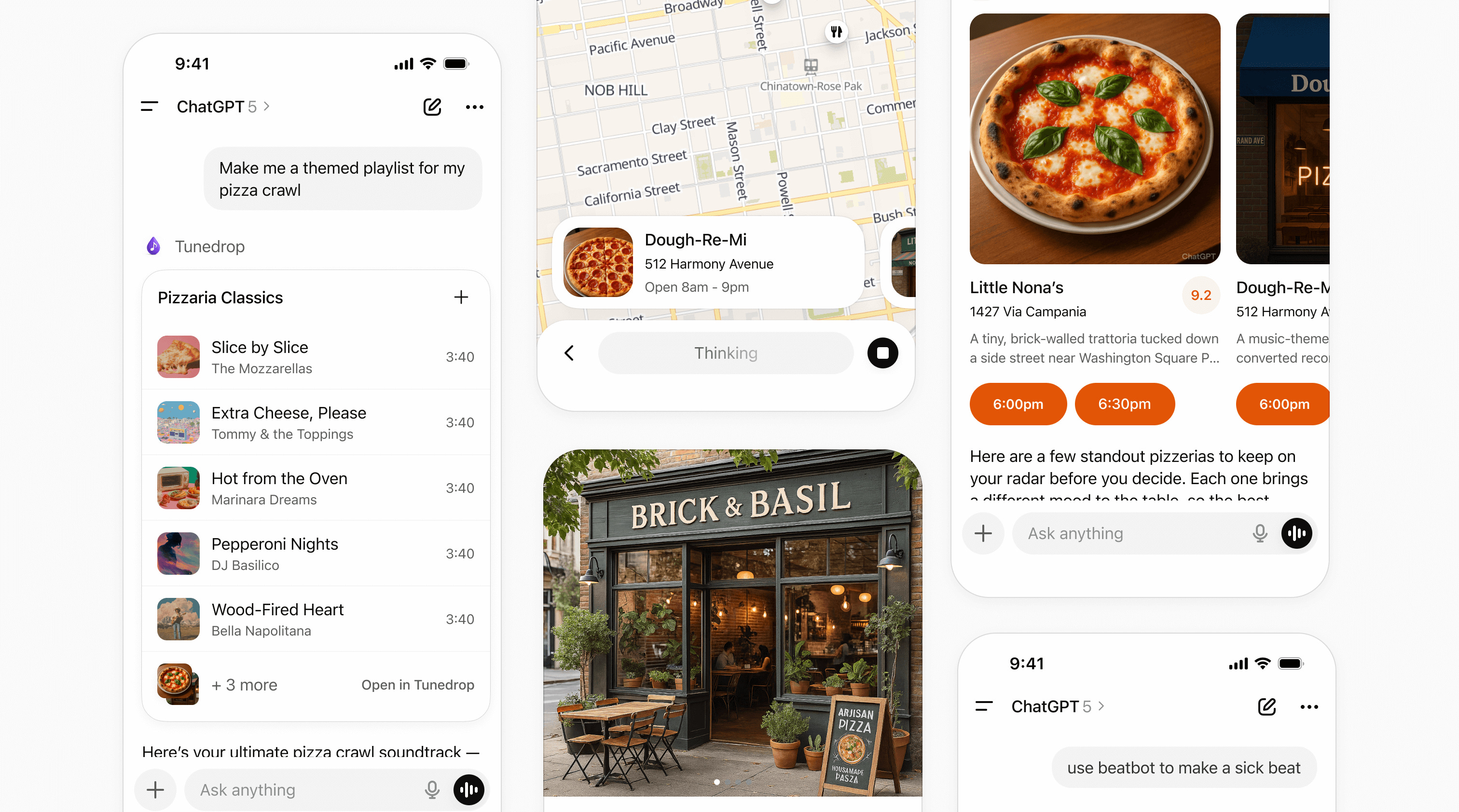

The Next AI Wave: Personal AI & Agentic Commerce

The convergence of personal assistants and automated purchasing protocols.

HTTP 402: The Missing Link

Enabling native payments at the protocol level to fuel the agentic economy.

The End of Mobile Apps

Why AI interfaces will eventually replace the app ecosystem as we know it.

Awards

Solana Demo Day Dublin

HubSpot Pitch Competition UKI

Ethereum Dublin Hackathon

European Solana Demo Day

Let's Connect

Whether you're building an AI startup, looking for strategic advice, or exploring collaboration opportunities, I'd love to hear from you.

dennj.osele@gmail.com